March 2026: More control over your semantic layer, more places to use it

As Mosaic adoption grows across the enterprise, two questions come up more often: how do you connect your semantic layer to the expanding ecosystem of AI tools, and how do you maintain model quality as more teams contribute? The March 2026 release addresses both. It makes it easier to extend governed semantic context into AI tools, catch model issues earlier, and organize, discover, and share trusted content.

This month’s highlights include Direct Mosaic Model Access via MCP, Mosaic Model Versioning with GitHub Integration, built-in time intelligence, and model validation in Mosaic Studio.

AI: Extend governed semantic context into AI tools

Direct Mosaic Model Access via MCP

AI tools are multiplying across the enterprise. Most still lack access to the governed business definitions your analytics teams have spent years maintaining. With this release, Mosaic models can meet those tools where they already are.

Organizations can now connect Mosaic models directly to a growing ecosystem of MCP-compatible AI tools, including Claude, Gemini, Copilot, and ChatGPT, without duplicating business definitions outside Mosaic. Users can discover semantic objects, retrieve governed model details, and ask natural language questions against definitions already in use across the business.

Instead of rebuilding metric logic for every AI assistant that gets deployed, teams can extend the trusted semantic foundation they have already built in Mosaic. That means more consistent definitions, authenticated access, and governance built in from the start, which makes for a more scalable path to enterprise AI.
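As a rough illustration of what an MCP-compatible client sends, the sketch below builds the two JSON-RPC 2.0 messages defined by the Model Context Protocol for discovering and invoking tools (`tools/list` and `tools/call`). The tool name `get_metric_definition` and its `metric` argument are hypothetical. This post does not list the tools a Mosaic MCP server exposes, so treat those names as placeholders.

```python
import json

def list_tools_request(request_id: int) -> dict:
    """Build an MCP `tools/list` request (JSON-RPC 2.0)."""
    return {"jsonrpc": "2.0", "id": request_id, "method": "tools/list"}

def call_tool_request(request_id: int, tool: str, arguments: dict) -> dict:
    """Build an MCP `tools/call` request for a named tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }

# Hypothetical tool and argument names, for illustration only.
discover = list_tools_request(1)
lookup = call_tool_request(2, "get_metric_definition", {"metric": "revenue"})

# Serialized form that a client would send over the MCP transport.
payload = json.dumps(lookup)
```

In practice an AI tool's MCP client handles these messages itself. The point is that the definition is fetched from the governed model at request time rather than copied into the assistant.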

Mosaic: Build, validate, and version semantic models

Mosaic Model Versioning with GitHub Integration

As more teams build and refine semantic models, managing change becomes just as critical as building the model itself. Until now, tracking who changed what, comparing versions, or recovering from a bad publish required workarounds. That changes with this release.

Mosaic now integrates natively with GitHub, storing model definitions as text-based objects that work with standard Git workflows. Admins and model contributors can track changes across the full lifecycle, compare versions side by side, branch for isolated development, and restore prior states without disrupting what's already in production.

This gives teams a cleaner path to production: structured reviews, repeatable release processes, and real rollback when things go wrong. For organizations extending semantic adoption across multiple teams, this is the operational discipline that keeps semantic development sustainable as it scales.
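To show why text-based definitions matter for review, here is a minimal sketch that compares two versions of a model definition with Python's standard `difflib`. The definition format (the `metric`, `expression`, and `filter` keys) is invented for illustration and is not Mosaic's actual file format. In a real workflow, Git and GitHub would produce this diff.

```python
import difflib

# Hypothetical text-based model definitions (format is an assumption).
published = """\
metric: revenue
expression: SUM(order_amount)
filter: status = 'complete'
"""

draft = """\
metric: revenue
expression: SUM(order_amount - discount)
filter: status = 'complete'
"""

def model_diff(old: str, new: str) -> list[str]:
    """Return a unified diff between two definition texts, one line per entry."""
    return list(
        difflib.unified_diff(
            old.splitlines(),
            new.splitlines(),
            fromfile="published",
            tofile="draft",
            lineterm="",
        )
    )

diff = model_diff(published, draft)
print("\n".join(diff))
```

Because the change shows up as one removed line and one added line, a reviewer can see that only the metric expression changed before the draft is merged.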

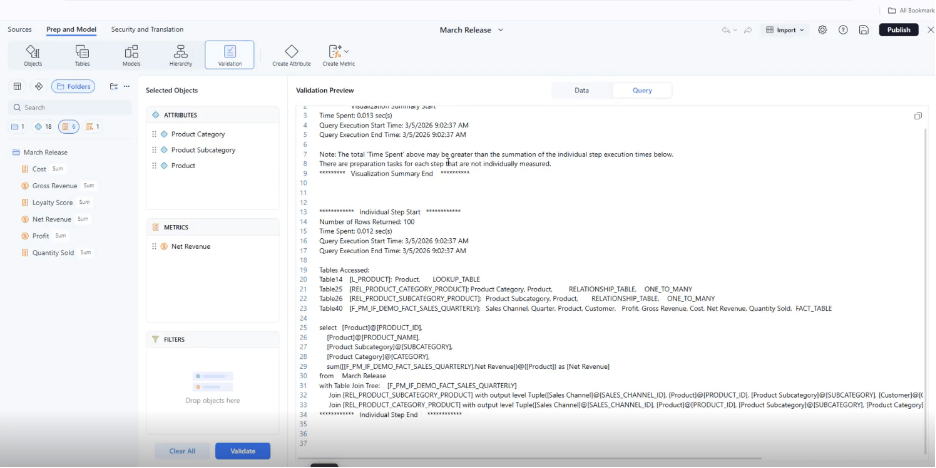

Validate Mosaic Models Earlier in Mosaic Studio

Publishing a broken model wastes time for everyone. Model Validation in Mosaic Studio helps authors catch problems before they reach production.

Directly in the modeling interface, authors can inspect sample data, review the generated query, identify join issues, catch metric logic errors, and confirm aggregation behavior. Security filters are respected, and no dashboard build is required.

A broken join gets caught in Studio during a five-minute check, not later in the cycle when downstream users are already relying on the dashboard. Model authors iterate faster, troubleshoot earlier, and publish with more confidence, and the build-test-publish cycle gets shorter.

Built-In Time Intelligence for Mosaic Models

Year-to-date, quarter-to-date, month-to-date, and week-to-date comparisons are among the most common requests in analytics. They are also a common source of duplicated logic, inconsistent metrics, and semantic sprawl across models.

Model authors can now enable built-in time intelligence directly in Mosaic Models. Mosaic generates the corresponding time-based metrics automatically, without custom SQL or extra semantic objects to maintain.

So when finance needs QTD revenue versus the prior period, the metric is already there. No ticket to the modeling team, no custom SQL, and no waiting. Teams spend less time rebuilding the same comparisons, and consumers get more consistent time-based insights across every dashboard that uses the model.
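For readers who want to see what this kind of logic involves, the sketch below computes period-to-date totals by hand in Python. It is not Mosaic's implementation. The function names, the Monday week start, and the sample figures are all assumptions made for illustration.

```python
from datetime import date, timedelta

def period_start(today: date, period: str) -> date:
    """Return the first day of the period that contains `today`."""
    if period == "YTD":
        return date(today.year, 1, 1)
    if period == "QTD":
        first_month = 3 * ((today.month - 1) // 3) + 1
        return date(today.year, first_month, 1)
    if period == "MTD":
        return date(today.year, today.month, 1)
    if period == "WTD":
        # Assumption: weeks start on Monday.
        return today - timedelta(days=today.weekday())
    raise ValueError(f"unknown period: {period}")

def to_date_total(rows, today: date, period: str) -> float:
    """Sum amounts dated from the period start through `today`, inclusive."""
    start = period_start(today, period)
    return sum(amount for day, amount in rows if start <= day <= today)

# Made-up sample data: (order date, revenue).
sales = [
    (date(2025, 12, 30), 40.0),
    (date(2026, 1, 15), 100.0),
    (date(2026, 2, 20), 200.0),
    (date(2026, 3, 2), 50.0),
    (date(2026, 3, 10), 25.0),
]
today = date(2026, 3, 11)

totals = {p: to_date_total(sales, today, p) for p in ("YTD", "QTD", "MTD", "WTD")}
```

Each team that writes this by hand has to make the same choices about week starts and inclusive boundaries, which is where inconsistent metrics tend to come from.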

BI: Find data faster, share outputs with more context

Custom Grouping and Reordering in Dashboard Dataset Panels

Large datasets are hard to navigate when attributes and metrics are spread across a flat, unorganized list. Authors can now group and rearrange objects inside dashboard dataset panels by business domain, workflow, or hierarchy. That structure is saved within the dashboard, so every creator starts from a logical, organized view rather than rebuilding their own sense of the data.

The result is a cleaner authoring experience and faster navigation through complex datasets. So a new analyst onboarding to a 200-object dataset can find the right metric in seconds instead of asking a colleague which one to use.

Smarter Content Discovery in Library Web

In large content libraries, not all search results are equally trustworthy. A rarely used report showing up alongside a widely adopted dashboard slows down users who need reliable content fast.

Library Web search now factors in usage data. Frequently accessed content ranks higher, while content with a history of errors surfaces lower. Users can also see at a glance how widely a report is used before opening it. That means when someone searches for a revenue report, they find the one 400 people rely on, not the draft someone abandoned six months ago.
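One way to picture usage-aware ranking is a score that combines text relevance with a usage boost and an error penalty. The sketch below is purely illustrative. The actual ranking formula is not described in this post, and the field names, the logarithmic boost, and the sample numbers are assumptions.

```python
import math
from dataclasses import dataclass

@dataclass
class Item:
    title: str
    text_match: float  # 0..1, how well the title matches the query
    views_90d: int     # recent usage count
    error_rate: float  # 0..1, share of recent runs that failed

def score(item: Item) -> float:
    """Hypothetical score: relevance, boosted by usage, reduced by errors."""
    usage_boost = 1.0 + math.log1p(item.views_90d)
    error_penalty = 1.0 - item.error_rate
    return item.text_match * usage_boost * error_penalty

def rank(items: list[Item]) -> list[Item]:
    """Return items ordered from highest to lowest score."""
    return sorted(items, key=score, reverse=True)

results = rank([
    Item("Revenue report (draft)", text_match=0.9, views_90d=2, error_rate=0.0),
    Item("Revenue report (flaky)", text_match=0.9, views_90d=300, error_rate=0.6),
    Item("Revenue dashboard", text_match=0.8, views_90d=400, error_rate=0.01),
])
ordered_titles = [item.title for item in results]
```

With these made-up inputs, the widely used dashboard ranks first even though its text match is slightly weaker, and the abandoned draft falls to the bottom.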

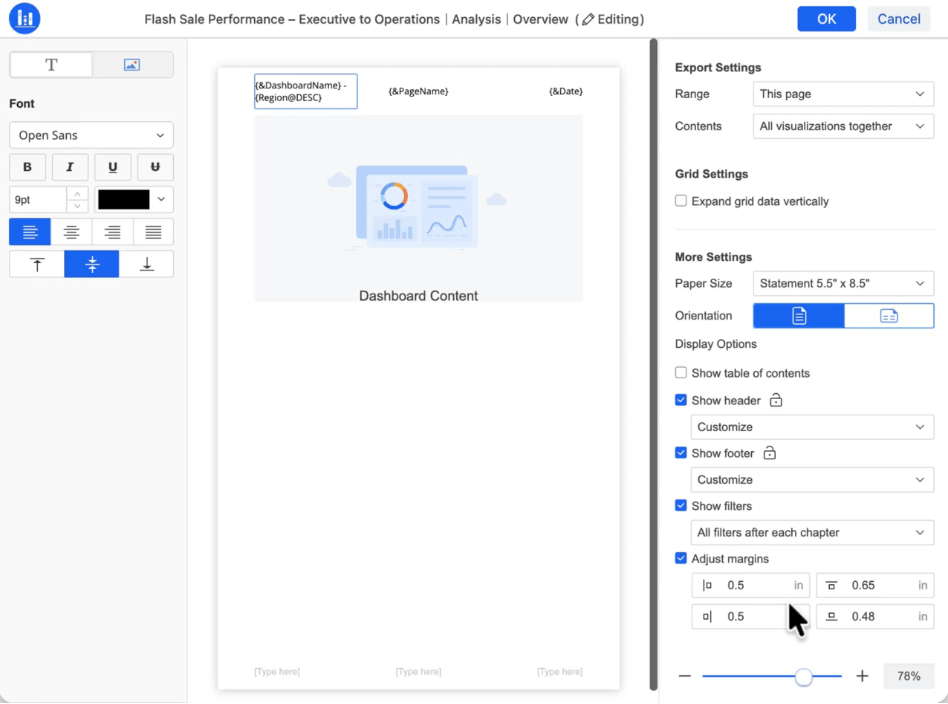

Attributes and Metrics in PDF Headers and Footers

Exported reports often lose context once they leave the dashboard. Attributes and metrics can now appear directly in PDF headers and footers, so region names, key figures, or filter values travel with the report when it is shared. That makes exported reports more self-explanatory and reduces follow-up questions when PDFs circulate beyond the original dashboard audience.

Together, these March 2026 updates make it easier for teams to trust what they build, find what they need, and extend governed context into every tool their organization uses. From versioning model changes in GitHub to querying governed definitions from MCP-compatible AI tools, the result is less rework, fewer broken experiences, and faster time to insight, without giving up consistency or control.

Learn more about these March updates on our What's New page and in the product documentation.