How to Evaluate a Semantic Layer: The 7 Criteria That Actually Matter

Most evaluations start with feature lists. The ones that succeed start with architecture.

Most semantic layer evaluations fail before the vendor is even chosen. Buyers run them like software trials when they are really making long-lived architecture decisions.

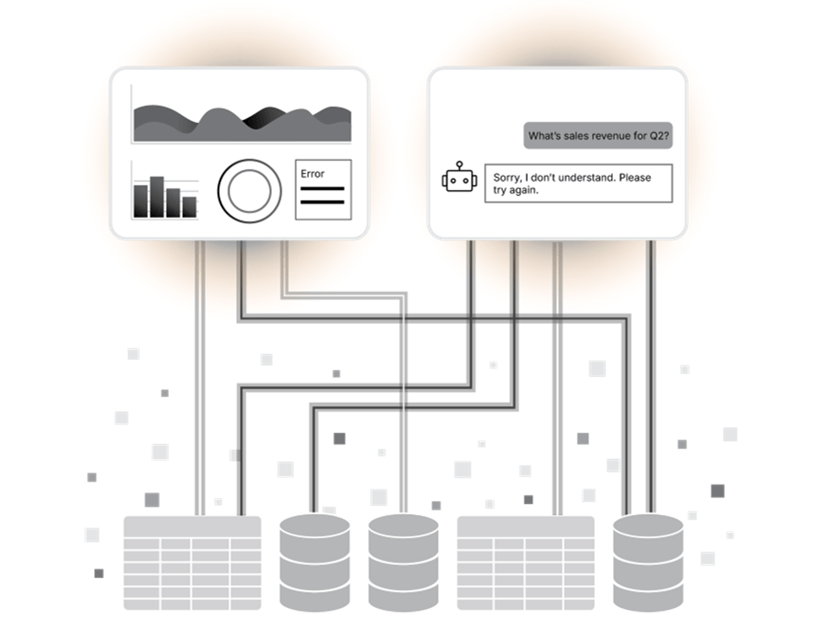

That mistake shows up later as KPI disputes that never fully disappear, security rules that have to be recreated in every tool, AI pilots that stall on governance questions, and teams that discover too late that their business logic is trapped inside a single platform.

A good semantic layer should create customer value in practical ways: fewer arguments about numbers, less manual reconciliation, faster time to insight, more trustworthy AI answers, and less risk when the stack changes. That is why the right evaluation is not about who has the best demo. It is about who can prove their architecture will hold up under real enterprise conditions.

Ninety-five percent of data leaders say defining metrics consistently across tools is a challenge. That is exactly why semantic layer evaluations should be judged on architectural durability, not feature theater.

The most expensive mistake buyers make

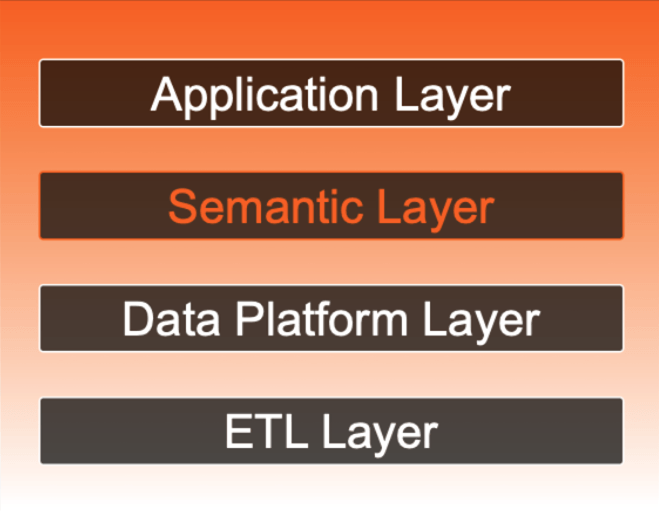

Platform-native semantic layers solve real problems. But most of them stop at the platform boundary.

When a warehouse or BI tool adds semantic features, it is often solving a genuine problem: metric inconsistency within the platforms it controls. The problem is what happens next. Add a second BI tool. Add a second warehouse. Add an AI agent that needs governed access to the same definitions. Suddenly the perimeter becomes the limitation. Definitions do not travel. Governance does not extend. Teams start rebuilding logic in new places.

This is not an argument that warehouse-native or BI-native semantic features are bad. It is an argument that a useful feature is not automatically an enterprise semantic foundation. The real question is not "Does this vendor have a semantic layer?" It is "Does this semantic layer survive when our stack changes?" And in most enterprises, the stack will change.


What a real evaluation should prove

Before going criterion by criterion, it helps to define the outcome. A real semantic layer evaluation should prove four things:

- The same governed metric stays consistent across more than one consumer, not just one preferred tool.

- Security and governance policies follow the data across dashboards, notebooks, applications, and AI interfaces.

- The platform can deliver an initial governed domain fast enough to create internal momentum and keep executive sponsorship.

- The architecture can absorb change without forcing a full rebuild of business logic the next time a warehouse, BI tool, or AI surface changes.

Those are the outcomes the seven criteria below should help buyers test.

The 7 criteria that separate enterprise-grade from everything else

These criteria come directly from the 2026 Enterprise Semantic Layer Buyer's Guide. The emphasis here is practical: not what each criterion means in theory, but what it requires in a live vendor environment.

1. Platform independence and real federation

Enterprise data stacks do not stand still. Warehouses change. BI strategies evolve. New AI interfaces show up faster than governance teams can rewrite policy. A semantic layer that stores business logic inside one warehouse or one BI tool may feel convenient at the start, but it sets up an expensive rebuild later.

Real platform independence means business definitions, security policies, and metrics survive platform change intact. Not "mostly portable." Not "portable with some manual translation." Intact. That matters because the customer value here is durability: less lock-in, less rework, and less risk every time the architecture evolves.

In the Strategy and CIO Dive Studio research, 66% of data leaders said the ability to switch BI tools without rebuilding definitions matters to them, and 49% said the same about switching warehouses.

Test this: Ask for a customer example where the vendor supported a warehouse or tool migration while the semantic layer remained intact. Then ask exactly what had to be rebuilt and what did not.

2. AI-powered modeling and data readiness

Traditional semantic modeling can be slow because every object, relationship, and metric has to be assembled by specialists. That is manageable for a narrow proof of concept. It is a drag on time to value at enterprise scale.

AI-assisted discovery, relationship detection, and metric generation can compress weeks of effort into days, but speed alone is not the point. The stronger platforms also surface data quality issues early so teams do not build polished semantic models on top of broken inputs.

The result is faster launches, fewer surprises in production, and a better chance of showing a governed domain quickly enough to keep stakeholders engaged. Eighty-three percent of data teams already use AI to accelerate data modeling.

Test this: Show schema discovery, relationship detection, metric creation, and data quality validation end to end. Ask where a human stays in the loop and where the system takes over.

3. Semantic depth and richness

There is a meaningful difference between a metric layer and a semantic layer. A metric layer can define calculations. A true semantic layer has to handle complex hierarchies, fiscal calendars, non-additive measures, reusable business context, and rich metadata that humans and AI systems can reason over.
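Non-additive measures are where a simple metric layer most often goes wrong. The minimal Python sketch below uses made-up data (the region names and customer IDs are hypothetical) to show the problem: distinct customer counts computed per region cannot simply be summed into a total, because a customer who buys in two regions gets counted twice. A semantic layer has to know to recompute the measure at the higher level instead of aggregating lower-level results.

```python
# Hypothetical order rows: (region, customer_id). Illustrative data only.
orders = [
    ("EMEA", "c1"), ("EMEA", "c2"),
    ("AMER", "c2"), ("AMER", "c3"),
]

def distinct_customers(rows):
    """Count unique customers in the given rows (a non-additive measure)."""
    return len({customer for _, customer in rows})

# Distinct customers per region.
by_region = {
    region: distinct_customers([row for row in orders if row[0] == region])
    for region in {row[0] for row in orders}
}

naive_total = sum(by_region.values())       # 4: customer c2 is counted twice
correct_total = distinct_customers(orders)  # 3: recomputed over all rows
```

The same reasoning applies to ratios, averages, and balances: the definition has to carry its aggregation rule with it, or every consumer will roll it up differently.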

This matters because shallow semantics limit reuse. They also limit trust. If an AI assistant can only see a thin layer of simple aggregations, it will sound helpful without actually understanding how the business works. Rich semantics improve consistency across teams and make AI outputs more grounded.

Test this: Ask the vendor to show a ragged or unbalanced hierarchy, fiscal time intelligence, and metadata that describes each object well enough for an AI interface to answer questions about it accurately.
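Fiscal time intelligence starts with mapping calendar dates to fiscal periods consistently for every consumer. The Python sketch below is a simplified illustration, not any vendor's implementation: it assumes the fiscal year begins on the 1st of a configurable month and is labeled by the calendar year in which it ends. Real enterprise calendars (for example, 4-4-5 retail calendars) are more involved, which is exactly why this logic belongs in the semantic layer rather than in each tool.

```python
from datetime import date

def fiscal_period(d: date, start_month: int) -> tuple:
    """Map a calendar date to (fiscal_year, fiscal_quarter).

    Assumption: the fiscal year starts on the 1st of `start_month` and is
    labeled by the calendar year in which it ends.
    """
    # Months elapsed since the fiscal year began (0..11).
    offset = (d.month - start_month) % 12
    quarter = offset // 3 + 1
    # Dates on or after the start month fall in the fiscal year that ends
    # next calendar year, unless the fiscal year matches the calendar year.
    ends_next_year = start_month > 1 and d.month >= start_month
    fiscal_year = d.year + 1 if ends_next_year else d.year
    return fiscal_year, quarter

# Hypothetical fiscal year starting February 1.
print(fiscal_period(date(2024, 1, 15), 2))  # (2024, 4)
print(fiscal_period(date(2024, 2, 1), 2))   # (2025, 1)
print(fiscal_period(date(2024, 8, 30), 2))  # (2025, 3)
```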

4. Open standards and universal access

A semantic layer that only works well with one set of tools recreates the fragmentation problem it claims to solve. It just does it more slowly.

Every serious consumer should be able to query the same governed definitions through standard interfaces: BI tools, notebooks, embedded applications, spreadsheets, and AI agents. The goal is a bridge, not a moat. Definitions should be created once and reused broadly without rebuilding logic for every new interface.

This is also where open interoperability matters. Buyers should ask about practical support for exchange standards such as Open Semantic Interchange and whether the vendor is designing for a multi-tool future or trying to keep the model captive inside one ecosystem.

Test this: Ask to see the same governed metric consumed by two BI tools, a notebook, and an AI interface in one workflow with the same result and the same security model.

5. Active security and governance

This is where many evaluations go too shallow. Traditional governance was built for static reporting: define permissions, publish dashboards, assume the policy holds. That does not describe the AI era.

Governance now has to travel with the meaning of the data, not just its storage location. That means row-level security, column-level security, and role-based access defined once and enforced consistently at query time across every surface. Not per tool. Once.

In practice, that means lower compliance risk, fewer policy gaps, and no duplicated security work as new interfaces are added. Eighty-two percent of leaders rate governance and observability as very or extremely important.

Test this: Show the same row-level policy applied to a BI user, a notebook user, and an AI agent with no per-tool duplication. Then show the audit trail.
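"Defined once, enforced at query time" can be illustrated with a toy model. In the Python sketch below, every name (`User`, `region_policy`, `query_revenue`, the surface labels) and all of the data are hypothetical. The point is structural: the row-level rule lives in one function on the single query path that every surface uses, and each query writes an audit entry, so a BI dashboard, a notebook, and an AI agent all get the same filtered answer without any per-tool copy of the policy.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class User:
    name: str
    region: str  # attribute the hypothetical policy filters on

# Governed rows behind a revenue metric (illustrative data only).
SALES = [
    {"region": "EMEA", "amount": 100},
    {"region": "AMER", "amount": 250},
    {"region": "EMEA", "amount": 50},
]

AUDIT_LOG: list = []

def region_policy(user: User, row: dict) -> bool:
    """Row-level policy, defined once: users see only their own region."""
    return row["region"] == user.region

def query_revenue(user: User, surface: str) -> int:
    """Shared query path for every surface (BI, notebook, AI agent).

    The policy is applied here at query time, so no surface carries its
    own copy of the rule.
    """
    visible = [row for row in SALES if region_policy(user, row)]
    result = sum(row["amount"] for row in visible)
    AUDIT_LOG.append({"user": user.name, "surface": surface,
                      "rows_visible": len(visible), "result": result})
    return result

analyst = User("ana", "EMEA")
for surface in ("bi_dashboard", "notebook", "ai_agent"):
    print(surface, query_revenue(analyst, surface))  # 150 on every surface
```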

6. AI agent readiness

Three years ago, most buyers did not evaluate semantic layers for AI agents. Now they should.

The critical question is not whether an AI agent can access data. It is whether that access is authenticated, permission-aware, executed against shared business logic, and logged before a result is returned.

A UserEvidence study reported 22% fewer AI hallucinations and 28% faster AI deployment with a governed semantic layer in place. That makes AI readiness an operational criterion, not a future-facing bonus.

Test this: Ask the vendor to show a denied AI request, not just a successful one. Buyers should see the policy decision, the user context, and the audit log entry in a live environment.
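What a denied request should look like can be sketched in a few lines. The Python below is a hypothetical model, not any product's API: `AgentRequest`, `handle`, the role names, and the placeholder metric values are all invented for illustration. It follows the sequence described above: check that the request is authenticated, evaluate the role policy for the requested metric, write the decision with its user context to the audit log, and only then return either a denial or a value computed from the shared metric definition.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical policy: which roles may query which governed metrics.
METRIC_PERMISSIONS = {
    "revenue": {"finance"},
    "active_users": {"finance", "product"},
}

# Shared business logic: one definition per metric (placeholder values).
METRICS = {
    "revenue": lambda: 1_250_000,
    "active_users": lambda: 48_000,
}

AUDIT_LOG: list = []

@dataclass
class AgentRequest:
    user: Optional[str]  # end user the agent acts for; None = unauthenticated
    roles: frozenset     # roles resolved for that user
    metric: str

def handle(req: AgentRequest) -> dict:
    """Authenticate, authorize, log the decision, then return a result."""
    if req.user is None:
        decision, reason = "deny", "unauthenticated"
    elif not METRIC_PERMISSIONS.get(req.metric, set()) & req.roles:
        decision, reason = "deny", "role not permitted for metric"
    else:
        decision, reason = "allow", "role permitted"
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": req.user,
        "roles": sorted(req.roles),
        "metric": req.metric,
        "decision": decision,
        "reason": reason,
    })  # logged before anything is returned to the agent
    if decision == "deny":
        return {"status": "denied", "reason": reason}
    return {"status": "ok", "value": METRICS[req.metric]()}

print(handle(AgentRequest("dev", frozenset({"product"}), "revenue")))
# {'status': 'denied', 'reason': 'role not permitted for metric'}
```

In a live evaluation, the equivalent of that last audit entry (who asked, through which agent, which policy fired, and why) is what buyers should ask to see.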

7. Performance, scalability, and cost

This is the criterion buyers leave too late, and it is often the one that decides adoption. A semantic layer that cannot stay fast under production conditions will not become the governed foundation the business actually builds on.

Performance should be tested under realistic concurrency: dashboards, ad hoc analysis, and AI requests hitting the system at the same time. Cost should be tested too. A pricing model that punishes broad usage defeats the purpose of a governed semantic foundation.

The customer value here is adoption at scale. Teams use what is fast, reliable, and affordable enough to become a habit. The UserEvidence study reported a 20% decrease in downstream compute costs after deploying a governed semantic layer.

Test this: Ask for realistic p95 (95th-percentile) latency, the concurrency assumptions behind it, production-sized data volumes, and a clear explanation of what happens to price as query volume grows.
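It helps to compute p95 yourself from raw timings rather than accept a vendor's summary number. The Python sketch below uses the nearest-rank definition of a percentile (one of several common definitions; monitoring tools may interpolate instead), and the latency samples are invented for illustration. Note how the single slow outlier barely moves the median while the p95 exposes the tail users actually feel under concurrency.

```python
import math

def percentile(samples: list, p: float) -> float:
    """Nearest-rank percentile: smallest sample with at least p% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]

# Hypothetical latencies (ms) from one mixed run: dashboards, ad hoc
# queries, and AI requests hitting the system at the same time.
latencies = [120, 95, 310, 88, 140, 2200, 101, 99, 130, 115,
             97, 105, 125, 180, 92, 110, 135, 160, 240, 450]

print(percentile(latencies, 50), percentile(latencies, 95))  # 120 450
```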

Why buyers should evaluate these together

After feature-first evaluation, the next big mistake is checkbox evaluation. These criteria are not independent. A platform can look strong on modeling and fail on portability. Another can look strong on governance inside its own environment and fail the moment a second BI tool or AI surface enters the picture. Another can look fast in a clean demo and collapse under realistic concurrency.

That is why semantic layer evaluations should be built around proof, not slideware. The winning vendor is the one that can prove all of it holds together: not separately in clean demos, but together, on real data, under realistic conditions.

The biggest risk is not the wrong vendor. It is building around the right vendor so slowly that the business stops believing before the value arrives.

Where to start

The fastest path to a credible proof of value is not to model everything. It is to pick the one metric that causes the most friction and use it to test the architecture.

- Choose a high-conflict KPI such as revenue, customer count, or active users.

- Define it once in the semantic layer and validate parity across at least two consumer surfaces.

- Apply the same security policy to a dashboard user, a notebook user, and an AI interface with no duplicated policy work.

- Document the before-and-after in reconciliation effort, trust, and time to answer. That becomes the internal business case.
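The parity step can be made repeatable with a small check. In the Python sketch below, the surface names and values are hypothetical; each entry stands for the result one consumer surface returned for the same governed metric, filters, and user. A small relative tolerance is allowed because floating-point sums can differ slightly with aggregation order; for integer counts, an exact match is the right bar.

```python
import math

def check_parity(results: dict, rel_tol: float = 1e-9) -> list:
    """Return the surfaces whose value differs from the first surface's value.

    `results` maps a consumer surface name to the value it returned for
    the same governed metric, filters, and user.
    """
    surfaces = list(results)
    baseline = results[surfaces[0]]
    return [s for s in surfaces[1:]
            if not math.isclose(results[s], baseline, rel_tol=rel_tol)]

# Hypothetical results for one KPI pulled from three surfaces.
results = {
    "bi_tool_a": 1_250_000.0,
    "bi_tool_b": 1_250_000.0,
    "notebook": 1_249_870.0,
}
print(check_parity(results))  # ['notebook']
```

Any surface that shows up in the output is a place where logic is still being rebuilt outside the semantic layer, which is exactly the reconciliation effort the business case should measure.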

Frequently Asked Questions: Evaluating a Semantic Layer

What is the most important criterion when evaluating a semantic layer?

Platform independence is the best starting point because it reveals whether the semantic layer is a durable architectural asset or just a convenient feature inside one platform. In practice, though, no single criterion decides the outcome: what matters is how well portability, governance, AI readiness, and performance hold together.

Can a warehouse-native semantic layer work for the enterprise?

It can be a useful feature, especially in a tightly controlled environment. But once the enterprise spans multiple warehouses, tools, and AI interfaces, buyers should test very carefully whether definitions, security, and governance still travel without rebuilds.

How should buyers test semantic layer governance for AI?

Do not stop at a successful AI query. Ask the vendor to show a denied request, the policy evaluation, the user context, and the audit trail. That is where real production readiness becomes visible.

How long should a semantic layer proof of value take?

The first governed domain should show value in weeks, not in a long transformation program. Buyers do not need a fully modeled enterprise to evaluate the architecture. They need one meaningful domain that proves consistency, governance, and speed.

What are the key features of an enterprise semantic layer?

An enterprise semantic layer should provide platform-independent business definitions, unified governance across all data consumers, AI-ready metadata, real-time security enforcement, and the ability to survive technology stack changes without rebuilding business logic.

If those questions are live in your organization, the 2026 Enterprise Semantic Layer Buyer's Guide was built for exactly that stage of the evaluation. It includes the full checklist, vendor questions, and a proof-of-value blueprint designed to get a first governed domain into production in six to eight weeks.